Navigating AI dependency on a tight budget

28 Apr 2026

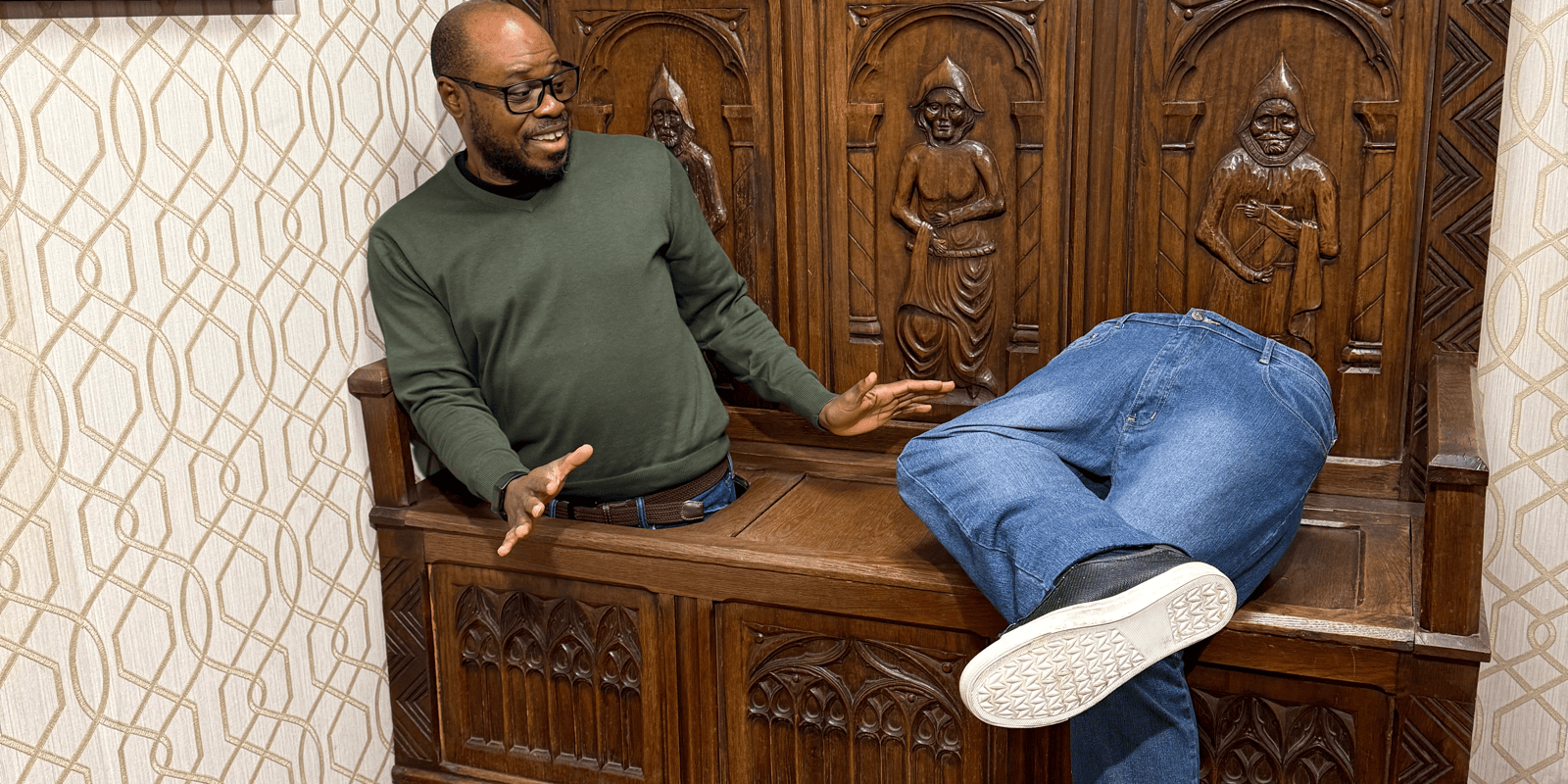

Old Town Prague, January 2026 @babafemio

I’ve been closely observing the business models driving the Frontier AI labs. The capital expenditure being deployed to build multi-billion-dollar data centres is unprecedented. While the fact that GPUs are here to stay is undeniable, it raises a core economic concern: How will these providers generate sustainable returns?

As the infrastructure matures, the market forces will inevitably mandate a pricing structure that maximises profit. This steady progression toward cost optimisation warrants caution when becoming overly dependent on proprietary models such as Claude Opus 4 or GPT 5. These models are undeniably powerful accelerators, but the central question for developers, startups, and enterprises remains: At what total cost of ownership are we getting hooked?

The looming cost curve

The early signals are already visible. Ben Thompson touches on this in his piece on the opportunity cost of compute, and we are already seeing trade-offs emerge. For instance, OpenAI’s pivot away from Sora signals a reallocation of compute toward higher-margin enterprise workloads, essentially “Fortune X” companies and well-funded startups that can absorb Pro and Max subscription pricing.

The approach is becoming clearer, lock in high-value enterprise users who increasingly rely on these systems because they’ve embedded them into core workflows, writing, presentations, analysis, and more. Recently, Anthropic also adjusted access to its Claude Code Pro plan, effectively increasing costs for users overnight. However, it claims it was running a test on a small percentage of users. The Register, in a recent post, takes the conversation to another level, suggesting that switching costs would be high if and when you decide to switch models or AI service providers.

So the question becomes: what does an individual, a startup, or even a large organisation do when building solutions for customers in a world where AI dependency is rising but budgets are still constrained? But never fear… There are a few paths forward.

The open-source advantage

First, open-source models like Kimi 2.5, Minimax 2.5 and GLM 5 are not significantly behind frontier models like Claude Opus 4.5 in practical performance for many use cases. That slight performance gap can often be closed with better context engineering. The resulting cost savings and loss of vendor dependence are a game-changer, maintaining critical agility as the cost of simple tasks continues to rise.

Efficiency over power

Second, you can adopt a layered approach, use a cheaper, capable model for ideation, structuring, and context building, then pass a refined prompt to a more advanced model for a final one-shot high-stakes output. In addition, local models like Gemma 4 already perform well for many everyday and operational tasks. If you are building software today, architecture remains one of the most important decisions, and since core architectural principles haven’t changed dramatically, less expensive models can still be highly effective at that level of thinking.

How value is shifting

The narrative surrounding AI often focuses solely on performance ceilings and costs. But as we navigate this period of rising dependency, the true measure of innovation is shifting. The most valuable asset is no longer the most powerful model, but the most resilient, flexible, and architecturally sound AI stack.

The coming years will favour the strategic builders, those who treat AI not as a single, indispensable black box, but as an adaptable, composite utility powered by diverse, cost-effective components. The future of AI development belongs not to those who pay the highest subscription fees, but to those who leverage the best harnesses and design the most intelligent systems.